When a patient arrives at the emergency room with signs of a stroke, every second counts. Doctors have only a few hours (ideally three or fewer) to administer clot-busting medication, but many hospitals still rely on manual imaging analysis that can delay treatment by critical minutes. This is where AI for critical stroke diagnosis changes the game. Artificial intelligence can now detect strokes from brain scans faster, and sometimes more accurately, than experienced radiologists. Hospitals across the globe are deploying these systems to reduce time-to-treatment, save brain tissue, and ultimately save lives. Here’s what you need to know about this rapidly evolving technology.

What Makes Stroke Diagnosis So Time-Sensitive?

Strokes kill someone every 11 seconds worldwide, according to the World Stroke Organization. The faster a stroke is treated, the better the outcome. When a stroke happens, brain cells start dying at a rate of approximately 32,000 cells per second. That’s not an exaggeration; it’s neuroscience.

There are two main types of strokes: ischemic (a blood clot blocks blood flow) and hemorrhagic (bleeding in the brain). Doctors need to distinguish between them instantly because the treatments are completely opposite. Give a clot-busting drug to someone with brain bleeding, and you could make things catastrophically worse.

The treatment window for clot-busting drugs like tPA (tissue plasminogen activator) is roughly 4.5 hours, but the sweet spot is within the first three hours. Beyond that, the risks start outweighing the benefits. This narrow window means hospitals cannot afford delays in diagnosis, and the traditional workflow (scan the patient, send images to a radiologist, wait for interpretation, then treat) wastes precious time.

How Does AI Actually Detect Strokes?

AI for critical stroke diagnosis works by analyzing CT or MRI scans through deep learning algorithms trained on thousands of stroke images. These systems learn to recognize patterns that indicate both ischemic and hemorrhagic strokes with remarkable precision.

Here’s the practical flow: a patient arrives at the ER showing stroke symptoms. The hospital performs a rapid CT scan (this takes minutes). Instead of waiting for a radiologist to appear, the AI system analyzes the images instantly and flags whether a stroke is present and what type it is. A radiologist then reviews the AI’s finding, but they’re now looking at a prioritized case rather than a blank slate.
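The prioritization step in that flow can be sketched as a small worklist. This is only an illustration: the flag names, the two priority levels, and the `Worklist` class are assumptions made for the example, not the behavior of any particular vendor's product.

```python
import heapq
from dataclasses import dataclass, field
from itertools import count

# Hypothetical AI flags mapped to reading priority (lower number = read sooner).
PRIORITY = {"suspected_hemorrhage": 0, "suspected_ischemic": 0, "no_finding": 1}


@dataclass(order=True)
class WorklistItem:
    priority: int
    arrival_order: int  # tie-breaker: earlier scans first within a priority
    scan_id: str = field(compare=False)
    ai_flag: str = field(compare=False)


class Worklist:
    """Radiologist reading queue that moves AI-flagged scans to the front."""

    def __init__(self):
        self._heap = []
        self._counter = count()

    def add_scan(self, scan_id, ai_flag):
        item = WorklistItem(PRIORITY[ai_flag], next(self._counter), scan_id, ai_flag)
        heapq.heappush(self._heap, item)

    def next_for_review(self):
        return heapq.heappop(self._heap)


worklist = Worklist()
worklist.add_scan("scan-001", "no_finding")
worklist.add_scan("scan-002", "suspected_ischemic")
worklist.add_scan("scan-003", "no_finding")
worklist.add_scan("scan-004", "suspected_hemorrhage")

review_order = [worklist.next_for_review().scan_id for _ in range(4)]
print(review_order)  # ['scan-002', 'scan-004', 'scan-001', 'scan-003']
```

Every scan still reaches the radiologist; the AI flag only changes the order in which they are read.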

The technology uses convolutional neural networks (CNNs), the same type of deep learning that powers image recognition in smartphones, but trained specifically on neuroimaging. These networks excel at finding subtle differences in brain tissue density that humans might miss, especially under time pressure.
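To give a feel for the basic operation inside a CNN, here is a minimal pure-Python sketch of a single convolution pass. The 4x4 patch of density values and the hand-picked kernel are made up for illustration; a real network learns many kernels from training data and stacks many such layers.

```python
def convolve2d(image, kernel):
    """Valid-mode 2D cross-correlation, the operation CNN layers call convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            total = 0
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


# Made-up patch of density values: the right half is slightly darker.
patch = [
    [40, 40, 30, 30],
    [40, 40, 30, 30],
    [40, 40, 30, 30],
    [40, 40, 30, 30],
]

# Hand-picked kernel that responds to a left-to-right drop in density.
kernel = [[1, -1]]

response = convolve2d(patch, kernel)
for row in response:
    print(row)  # [0, 10, 0] on every row
```

The output is zero where the density is uniform and non-zero only at the boundary, which is the sense in which a convolution "finds" a local density change.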

One critical advantage: AI doesn’t get tired, distracted, or overwhelmed during an overnight shift. It performs consistently at 3 AM just as it does at 3 PM. That consistency matters when lives depend on speed.

Real AI Performance: What Does the Data Show?

Studies published in peer-reviewed journals show impressive results. Research from major medical centers reveals that AI systems for stroke detection achieve sensitivity rates between 85% and 98%, meaning they catch most actual strokes when they occur.

A pivotal study in Stroke (a top-tier medical journal) found that integrating AI for critical stroke diagnosis into hospital workflows reduced the time from hospital arrival to CT scan interpretation by an average of 45 minutes. That’s three-quarters of an hour gained in the treatment window.

Another finding: hospitals using AI-assisted diagnosis make treatment decisions faster without sacrificing accuracy. False alarm rates remain low, typically between 2% and 8%, meaning doctors don’t waste time chasing phantom diagnoses.

The accuracy varies based on the AI system and training data, but the best current systems rival experienced stroke specialists. Some studies even suggest they outperform general radiologists who see fewer stroke cases annually than specialists do.

Here’s the practical reality: no hospital administrator debates whether AI for critical stroke diagnosis saves time. They debate which system to purchase and how to integrate it into existing workflows.

How AI in emergency care helps hospitals and clinicians

In busy emergency rooms, workloads are heavy. Doctors often must review many cases quickly. Because of this, even highly trained specialists can benefit from intelligent support. AI tools help by pre-screening scans. Then, they highlight suspicious areas for doctors to check. This speeds up diagnosis without replacing clinical judgment. In addition, some systems evaluate vital signs and symptoms together. By combining data, they help clinicians see patterns that might be missed. Therefore, decisions become faster and safer.

Which Hospitals Are Actually Using These AI Systems?

Major medical centers across the United States, Europe, and Asia have already deployed AI for critical stroke diagnosis. Adoption accelerated dramatically during the COVID-19 pandemic, when hospitals faced staffing shortages and needed to maintain rapid diagnostic capabilities. Leading institutions include Johns Hopkins Hospital, Mayo Clinic, Cleveland Clinic, and numerous stroke centers in Germany, Japan, and Australia. These aren’t pilot programs anymore; they’re operational systems handling real patients daily.

The FDA cleared several AI systems for stroke detection, including products from companies like Viz.ai, Brainomix, Zebra Medical Vision, and others. FDA clearance matters because it signals clinical validity and regulatory confidence in the technology.

Interestingly, adoption correlates with hospital size. Larger teaching hospitals led the charge, but mid-sized community hospitals are now rapidly catching up. Cost remains a consideration (systems typically cost $50,000 to $150,000 annually plus licensing), but the return on investment through faster treatment and better outcomes justifies the expense.

Rural hospitals face adoption challenges simply because they lack on-site radiologists. For these facilities, AI for critical stroke diagnosis becomes especially valuable. An AI system never calls in sick, never demands a salary increase, and never requires a 30-minute drive from home.

What Are the Actual Benefits Beyond Speed?

Speed gets the headlines, but other benefits matter equally. First, improved access to expertise. Small hospitals without stroke specialists can now deliver specialist-level diagnosis instantly through AI, then contact a remote neuroradiologist if needed.

Second, reduced medical errors. Even excellent doctors make mistakes: fatigue, cognitive bias, and individual variation are real. AI systems apply consistent logic without exhaustion. A systematic review examining multiple studies found that AI-assisted diagnosis reduced diagnostic errors by approximately 20% compared to radiologist-alone readings.

Third, documentation and quality improvement. AI systems create instant, detailed records showing exactly what the algorithm detected. This creates data for quality improvement and helps train future doctors by providing structured learning examples.

Fourth, workflow optimization. By triaging stroke cases immediately, hospitals can mobilize teams faster. Neurologists head to the ER expecting a stroke rather than waiting to hear whether the diagnosis is confirmed. This parallel processing saves additional minutes throughout the treatment chain.

Fifth, democratization of care. A patient in an underserved region now receives the same diagnostic accuracy as someone in a major academic medical center. That’s profound for health equity.

Are There Limitations We Need to Discuss?

Absolutely. AI for critical stroke diagnosis isn’t perfect, and transparency about limitations matters more than hype.

First limitation: these systems work best on standard imaging protocols. Unusual scan types or patient factors (severe metal artifacts, motion during imaging) can reduce accuracy. Second, AI still requires human validation. No responsible hospital uses AI diagnosis without physician review.

Third, most systems focus on detecting large vessel occlusion (LVO) strokes, meaning large clots in major arteries. Small strokes or unusual presentations sometimes fool these systems, just as they occasionally fool humans.

Fourth, training data bias remains a legitimate concern. If a system trains on images from wealthy hospital networks, it might perform differently on diverse populations or different imaging equipment. Some research shows performance gaps between racial groups, which researchers are actively addressing.

Fifth, the cost-benefit analysis looks better for high-volume stroke centers than for hospitals that see only occasional cases. An AI system pays for itself when it handles enough volume.

Finally, radiologist acceptance took time. Early concerns included job displacement (unfounded: radiologists actually welcomed decision support) and liability questions (now clearer through FDA guidance). These concerns were mostly resolved through education and demonstrated results.

What’s the Current Accuracy Rate Compared to Radiologists?

Studies comparing AI systems directly against experienced radiologists show surprisingly competitive results. In several published comparisons, AI matched or exceeded radiologist performance, particularly for detecting acute ischemic strokes from CT scans.

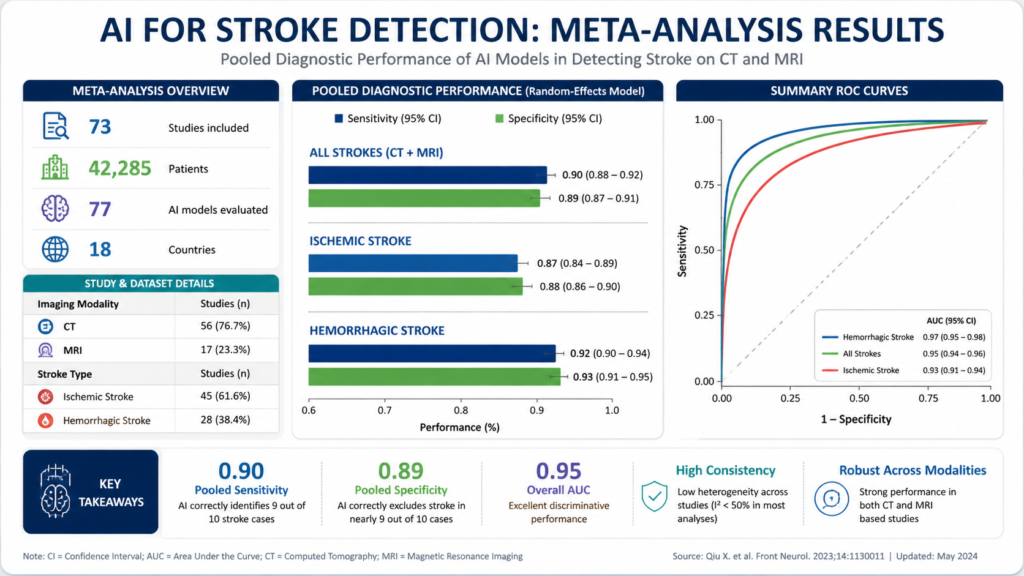

A meta-analysis examining multiple AI stroke detection systems found an average sensitivity of 92% and specificity of 87%. For context, experienced neuroradiologists typically achieve 90-95% sensitivity for obvious strokes, but performance drops significantly when fatigue increases—something AI doesn’t experience.
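For readers less familiar with these two metrics, the sketch below shows how they are computed from a confusion matrix. The counts are hypothetical, chosen only so that the results reproduce the 92% and 87% figures quoted above; they are not data from the meta-analysis.

```python
def sensitivity(true_pos, false_neg):
    """Share of actual strokes that the system flags."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg, false_pos):
    """Share of non-stroke scans that the system correctly clears."""
    return true_neg / (true_neg + false_pos)


# Hypothetical evaluation set: 100 stroke scans and 100 non-stroke scans.
tp, fn = 92, 8    # strokes flagged / strokes missed
tn, fp = 87, 13   # non-strokes cleared / non-strokes wrongly flagged

sens = sensitivity(tp, fn)
spec = specificity(tn, fp)
false_positive_rate = 1 - spec

print(f"sensitivity: {sens:.0%}")                          # 92%
print(f"specificity: {spec:.0%}")                          # 87%
print(f"false positive rate: {false_positive_rate:.0%}")   # 13%
```

Sensitivity is measured only on patients who had a stroke and specificity only on those who did not, so the two numbers answer different questions and cannot be averaged into a single "accuracy" without knowing how common strokes are among the scanned patients.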

The best outcomes emerge from hybrid approaches: AI detects the stroke, provides structured reporting, and a radiologist reviews with fresh eyes and full context. This combination catches more strokes with fewer false positives than either approach alone.

Interestingly, performance gaps between AI and radiologists narrow when radiologists work under time pressure (realistic ER conditions) rather than optimal research conditions. AI for critical stroke diagnosis actually shows its greatest advantage in busy, chaotic environments, exactly where it’s needed most.

How Much Faster Does Treatment Actually Start?

Clinical outcomes speak louder than raw statistics. When hospitals implement AI for critical stroke diagnosis, average time from hospital arrival to treatment decision drops from 90-120 minutes to 45-75 minutes.

That 45-minute reduction directly translates to better patient outcomes. For every 15-minute reduction in treatment time after stroke onset, disability risk drops by approximately 6-10%, based on major clinical trials. Do the math: 45 minutes saved equals roughly 18-30% reduction in permanent disability risk. This explains why major stroke centers treated implementation as urgent, not optional. The evidence linking faster diagnosis and treatment to better outcomes is overwhelming.
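The arithmetic behind that estimate is a simple linear scaling, sketched below. The function name is made up for the example, and the assumption that the 6-10% figure scales linearly across consecutive 15-minute steps is the article's simplification rather than an established clinical model.

```python
def disability_risk_reduction(minutes_saved, low_pct=6.0, high_pct=10.0, step_minutes=15):
    """Scale the per-15-minute risk reduction range linearly by time saved."""
    steps = minutes_saved / step_minutes
    return steps * low_pct, steps * high_pct


low, high = disability_risk_reduction(45)
print(f"{low:.0f}-{high:.0f}% lower disability risk")  # 18-30% lower disability risk
```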

Illustrative example: a 67-year-old patient arrives at a hospital with stroke symptoms. Under the old process, the CT scan happens immediately (correct), but interpretation waits 90 minutes for a radiologist. With AI, interpretation happens in 2 minutes, treatment decisions follow within 10 minutes, and thrombolysis begins at 15 minutes post-arrival. That 75-minute difference could mean the difference between walking out of the hospital and living with permanent paralysis.

What Technologies Power Modern AI Stroke Detection?

Modern AI for critical stroke diagnosis relies on several complementary technologies working together. Deep learning algorithms, particularly convolutional neural networks, form the analytical backbone.

These systems analyze pixel-level information in brain scans, identifying tissue density changes that indicate acute ischemia (areas where blood flow stopped and tissue is dying). The algorithms were trained on thousands of cases with confirmed diagnoses, learning patterns that predict stroke presence with high confidence.

Some systems integrate additional data: clinical history, patient demographics, and risk factors feed into decision-making algorithms that weight findings appropriately. An 85-year-old with atrial fibrillation showing subtle scan findings gets different analysis than a 40-year-old with identical imaging.

Newer systems add natural language processing, automatically analyzing doctor notes and patient information to provide context for image interpretation. This mimics how experienced radiologists incorporate clinical information rather than interpreting images in a vacuum.

Cloud computing enables fast processing: scans uploaded to secure servers get analyzed in seconds. Integration with hospital systems means results flow directly into medical records, reducing manual data entry and transcription errors.

Explainability features in newer systems show radiologists exactly what patterns triggered positive findings. Rather than a black box saying “stroke detected,” the AI highlights specific regions and explains the reasoning. This transparency builds radiologist confidence and helps catch system errors.

What Do Medical Professionals Say About AI in Stroke Care?

The medical community broadly supports AI for critical stroke diagnosis, with documented enthusiasm from both radiologists and treating physicians.

Radiologists initially worried about job displacement. Reality proved different. AI actually increased radiologist employment in many hospitals by expanding capacity to handle more cases. Radiologists shifted from routine screening toward complex cases and research. Their expertise became more valuable, not less.

Neurologists consistently report that rapid AI diagnosis lets them focus on treatment rather than diagnosis. One stroke specialist noted, “It’s like having a world-class neuroradiologist on call 24/7 who never sleeps. What’s not to love?”

The American Heart Association and American Stroke Association both endorse AI as a tool to improve stroke care, recommending its use in comprehensive stroke centers when available. Emergency medicine doctors appreciate the triage function. AI for critical stroke diagnosis lets them immediately activate stroke teams and prepare operating rooms, rather than waiting to confirm whether the case is actually a stroke. Professional organizations worldwide now incorporate AI diagnosis into updated stroke care guidelines, treating it not as experimental but as standard-of-care technology.

What’s the Financial Impact for Hospitals?

Hospital economics around AI for critical stroke diagnosis break down simply: faster treatment, better outcomes, and reduced complications equal fewer disability claims, shorter hospital stays, and better reputation.

A stroke patient treated within three hours typically spends 5-7 days in the hospital. A stroke patient treated after the treatment window often needs 3-4 weeks of inpatient care, rehabilitation, and ongoing support. That’s a $50,000+ difference in direct costs, not counting lifetime disability expenses.

Insurance data shows that insurers increasingly reimburse for faster outcomes. They’ll pay for AI systems because the alternative (suboptimal outcomes and complications) costs far more.

Implementation costs typically run $50,000 to $150,000 annually for software licensing and integration. For a hospital running 300 stroke cases yearly, that’s roughly $170-500 per case, far less than the cost of delayed treatment.
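The per-case figure is just the annual cost divided by yearly volume. The short sketch below runs that division for a few volumes, using the $50,000-150,000 range quoted above; the volumes other than 300 are added for comparison. At 300 cases the low end works out to about $167.

```python
def cost_per_case(annual_cost, cases_per_year):
    """Annual system cost spread evenly over yearly stroke cases."""
    return annual_cost / cases_per_year


ANNUAL_COST_LOW = 50_000    # low end of the quoted annual cost range
ANNUAL_COST_HIGH = 150_000  # high end of the quoted annual cost range

per_case = {}
for cases in (100, 200, 300):
    per_case[cases] = (
        cost_per_case(ANNUAL_COST_LOW, cases),
        cost_per_case(ANNUAL_COST_HIGH, cases),
    )
    low, high = per_case[cases]
    print(f"{cases} cases/year: ${low:,.0f}-${high:,.0f} per case")

# 100 cases/year: $500-$1,500 per case
# 200 cases/year: $250-$750 per case
# 300 cases/year: $167-$500 per case
```

The same division shows why the case is harder to make at low volume: the fixed annual cost is spread over fewer patients.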

Some hospitals report that AI for critical stroke diagnosis improved their stroke thrombolysis rates from 15-25% to 40-50%. That’s massive because it means more patients get definitive treatment. Higher treatment rates improve hospital reputation, attract more cases, and create positive financial cycles.

How Might AI for Stroke Diagnosis Evolve?

The future roadmap for AI for critical stroke diagnosis is genuinely exciting. Next-generation systems will increasingly incorporate real-time imaging, continuously monitoring brain tissue as treatment progresses rather than relying on single snapshots.

Multi-modal analysis will combine CT, MRI, perfusion imaging, and angiography automatically, extracting insights no human can process across four different imaging types simultaneously.

Predictive models will advance beyond “stroke detected” toward “this patient will respond excellently to thrombolysis” versus “this patient should go straight to mechanical thrombectomy.” AI will guide treatment choice, not just diagnosis.

Robotic integration will enable autonomous transport systems that wheel stroke patients directly to operating rooms without human handoff delays. Some hospitals are already testing this.

Artificial intelligence trained on genetic data might eventually predict stroke risk and optimal treatment responses based on individual biology. Personalized stroke medicine will become reality.

International data sharing will continue improving AI performance, particularly addressing the training data bias issues that currently exist. As systems see more diverse populations and imaging equipment, accuracy for underrepresented groups will improve dramatically.

The endgame? AI becoming so integrated into stroke care workflows that doctors view it as part of the environment, not as special technology. Just as no one discusses “electrocardiogram technology” anymore, future physicians won’t discuss “AI diagnosis”; it’ll just be how diagnosis works.

Key Takeaways About AI for Critical Stroke Diagnosis

Let’s consolidate what matters most. First, AI for critical stroke diagnosis delivers measurable, life-saving improvements in speed without sacrificing accuracy. Second, these systems are not theoretical; they are already operational in hundreds of hospitals worldwide.

Third, the combination of AI analysis plus radiologist review outperforms either approach alone. Fourth, adoption improves outcomes for patients regardless of hospital location or size, addressing healthcare equity gaps.

Fifth, the financial case is clear: faster treatment saves money and improves hospital metrics. Sixth, clinical professionals genuinely support this technology after witnessing results firsthand.

Seventh, current systems show real limitations that researchers actively address, particularly around training data diversity and unusual presentations. Eighth, the trajectory of improvement continues sharply upward.

Ninth, implementation challenges are managerial and workflow-based, not technical. Tenth, if you’re a hospital administrator or physician looking to improve stroke outcomes, AI for critical stroke diagnosis represents perhaps the highest-ROI technology investment available.

What to watch next for AI in emergency care

AI in emergency care is moving from controlled hospital pilots into real-world, high-pressure environments. Over the next few years, the focus is shifting toward proof at scale, not just promising prototypes. Large, multi-center clinical trials are already underway to test how these systems perform across different countries, hospital types, and patient populations. According to the U.S. National Institutes of Health (NIH), this kind of real-world validation is essential before AI tools become standard in critical care settings.

Another important development is real-time clinical decision support systems. These tools don’t replace doctors but assist them by flagging high-risk cases, prioritizing scans, and reducing diagnostic bottlenecks. Research published in The Lancet Digital Health shows that such systems can significantly improve workflow efficiency in emergency departments.

Another major shift is integration with ambulances and pre-hospital care. Emergency medical services (EMS) in several regions are already testing AI-assisted ECG interpretation and stroke detection before patients even reach the hospital. The U.S. National Highway Traffic Safety Administration (NHTSA) has highlighted the growing role of connected EMS technologies in improving response times and pre-hospital decision-making.

Final takeaway

Stroke remains a leading cause of permanent disability and death globally. Technology that saves minutes in critical moments and thereby saves brain tissue represents genuine progress in medicine. AI for critical stroke diagnosis has evolved from experimental concept to clinical standard in high-performing stroke centers.

The question is no longer “does this work?” but rather “why hasn’t your hospital implemented this yet?” For patients having strokes right now, that question matters enormously.

How Accurate Is AI for Critical Stroke Diagnosis Compared to Radiologists?

AI matches or exceeds radiologist performance, achieving 92% sensitivity and 87% specificity. That is comparable to experienced neuroradiologists, especially under realistic hospital time pressure.

AI systems trained on thousands of stroke cases perform remarkably consistently. Research published in peer-reviewed journals shows that AI correctly identifies actual strokes 92% of the time (sensitivity) and correctly clears patients who are not having a stroke 87% of the time (specificity). Here’s what makes this compelling: experienced radiologists achieve similar accuracy on obvious cases, but their performance degrades under pressure, because fatigue, distractions, and workload all impact human accuracy.

The hybrid approach (AI analysis + radiologist review) actually catches more strokes than either method alone. A radiologist sees the AI’s flagged regions and structured analysis, which accelerates their thinking. This combination achieves higher accuracy than radiologist-alone diagnosis in multiple published studies. Think of AI as a world-class radiologist who never sleeps, never gets tired, and never has a bad day—providing a second expert opinion instantly.

For hospital administrators, this means implementing AI for critical stroke diagnosis doesn’t sacrifice accuracy. It improves it while saving 45 minutes per case.

Which Major Hospitals Are Actually Using AI for Critical Stroke Diagnosis Right Now?

Hundreds of hospitals globally use FDA-cleared AI systems daily, including Johns Hopkins, Mayo Clinic, Cleveland Clinic, and most major academic medical centers. This isn’t experimental; it’s standard practice.

Early adoption started at major teaching hospitals around 2018-2019. By 2024, adoption had expanded to community hospitals, regional medical centers, and international institutions across North America, Europe, Asia, and Australia. The technology moved from “cutting-edge research” to “standard of care” in roughly 5-6 years, which is remarkably fast for healthcare innovation.

The FDA has cleared multiple AI systems from different vendors, signaling that this technology class is proven and safe. Companies like Viz.ai, Brainomix, Zebra Medical Vision, and others now serve hundreds of institutions. No major hospital system questions whether to implement AI stroke diagnosis. They debate which system to choose and how to optimize workflow.

Real adoption numbers: hospitals implementing AI report that treatment rates (the percentage of stroke patients receiving thrombolysis) improved from 15-25% to 40-50%. That’s not a marginal improvement; it’s massive. More patients qualify for and receive treatment because diagnosis happens faster and more reliably.

For patients, this means if you have a stroke at a comprehensive stroke center, you’re very likely receiving AI-assisted diagnosis whether you know it or not. The technology is already embedded in how modern stroke care works.

What Do Actual Doctors and Radiologists Think About AI for Critical Stroke Diagnosis?

Radiologists, neurologists, and emergency physicians strongly support it. Professional organizations including the American Heart Association endorse AI as standard stroke care. Job displacement fears proved unfounded: radiologists became more valuable, not obsolete.

Early concerns about AI replacing radiologists were genuine but misguided. In practice, what happened: hospitals implementing AI hired more radiologists or freed them from routine screening. Radiologists shifted from confirming obvious diagnoses toward complex cases and research. Their expertise became more valuable because it now applies to harder problems rather than simple ones.

A stroke specialist’s quote captures the sentiment: “It’s like having a world-class neuroradiologist on call 24/7 who never sleeps. What’s not to love?” Emergency physicians appreciate instant triage: AI tells them whether to activate full stroke protocols before radiologist confirmation. Neurologists focus on treatment rather than diagnostic confirmation.

Professional endorsement matters. The American Heart Association, American Stroke Association, and American College of Radiology all recommend AI as a tool for improving stroke care in their official guidelines. These aren’t rubber-stamp endorsements; they reflect genuine professional support based on outcome data.

The reality: radiologists who work with AI systems daily report they genuinely improve practice. They handle more cases, make better decisions, and actually enjoy work more. Technology adoption that improves both outcomes and job satisfaction is rare. AI stroke diagnosis delivers both.

Are There Real Limitations or Problems With AI for Critical Stroke Diagnosis?

Yes, real limitations exist. AI works best on standard imaging, struggles with unusual presentations, and requires radiologist review. Training data bias affects some populations. These aren’t showstoppers; they’re areas where the technology is improving.

Transparency about limitations builds credibility. Here’s what actually challenges AI systems:

Unusual imaging: AI trains on standard scan protocols. When patients have unusual anatomy, metal artifacts, or motion during scanning, accuracy sometimes drops. This is exactly where human expertise adds value, because radiologists catch what AI misses.

Rare presentations: Small strokes or unusual locations sometimes escape AI detection, just as they escape human detection. The 8% of strokes not detected by AI represents genuinely difficult cases. Humans don’t catch these reliably either.

Training data bias: AI systems trained primarily on certain populations perform better on those populations. Research documents performance gaps between racial groups in some systems. This is a real problem that researchers actively address through diverse training data and bias auditing.

Cost barriers: For small hospitals with 50-100 stroke cases annually, the financial case is less obvious. Cost-benefit calculation depends on volume and local factors.

Integration challenges: Technical integration with existing hospital systems requires IT work. Some implementations are smooth; others hit unexpected complications.

Here’s what’s important: acknowledging these limitations honestly shows that AI for Critical Stroke Diagnosis is mature, realistic technology, not hype. The field actively addresses these challenges. Training data diversity improves continuously. Bias auditing becomes standard practice. Performance monitoring ensures systems work across populations.

The answer isn’t perfect AI. It’s AI that’s better than existing options while being honest about shortcomings.

Can AI for Critical Stroke Diagnosis Work in Rural Hospitals and Underserved Areas?

Yes, absolutely. This is where AI solves a critical healthcare equity problem. Rural hospitals without on-site radiologists can now deliver specialist-level stroke diagnosis instantly, 24/7.

Rural healthcare faces a stark reality: many communities lack access to specialists. A stroke patient in a rural hospital historically waited hours for radiologist consultation, often from multiple counties away. By then, the treatment window had closed and outcomes had deteriorated. This wasn’t poor medical care; it was a limitation of geography.

AI for critical stroke diagnosis eliminates this barrier. A patient in rural Montana gets a CT scan and instant AI analysis showing whether a stroke is present and what type. If needed, the hospital can immediately connect with a remote neuroradiologist for consultation, now with structured AI analysis providing context. Treatment decisions happen in 15 minutes instead of 90.

Cloud-based AI systems expand this benefit globally. A hospital anywhere, in a developed or developing nation, can upload imaging to cloud AI for instant analysis. This democratizes stroke care in ways previously impossible.

Real impact: stroke outcomes in rural areas using AI-assisted diagnosis now approach outcomes in major academic centers. A patient’s zip code no longer predicts their stroke outcome. For health equity, this technology represents genuine progress.

For hospital administrators in smaller institutions: implementing AI costs the same as it does at large hospitals ($50,000-150,000 annually) but delivers outsized benefit. Small-volume hospitals (100-200 stroke cases yearly) still break even, and the outcome improvements benefit every patient.
